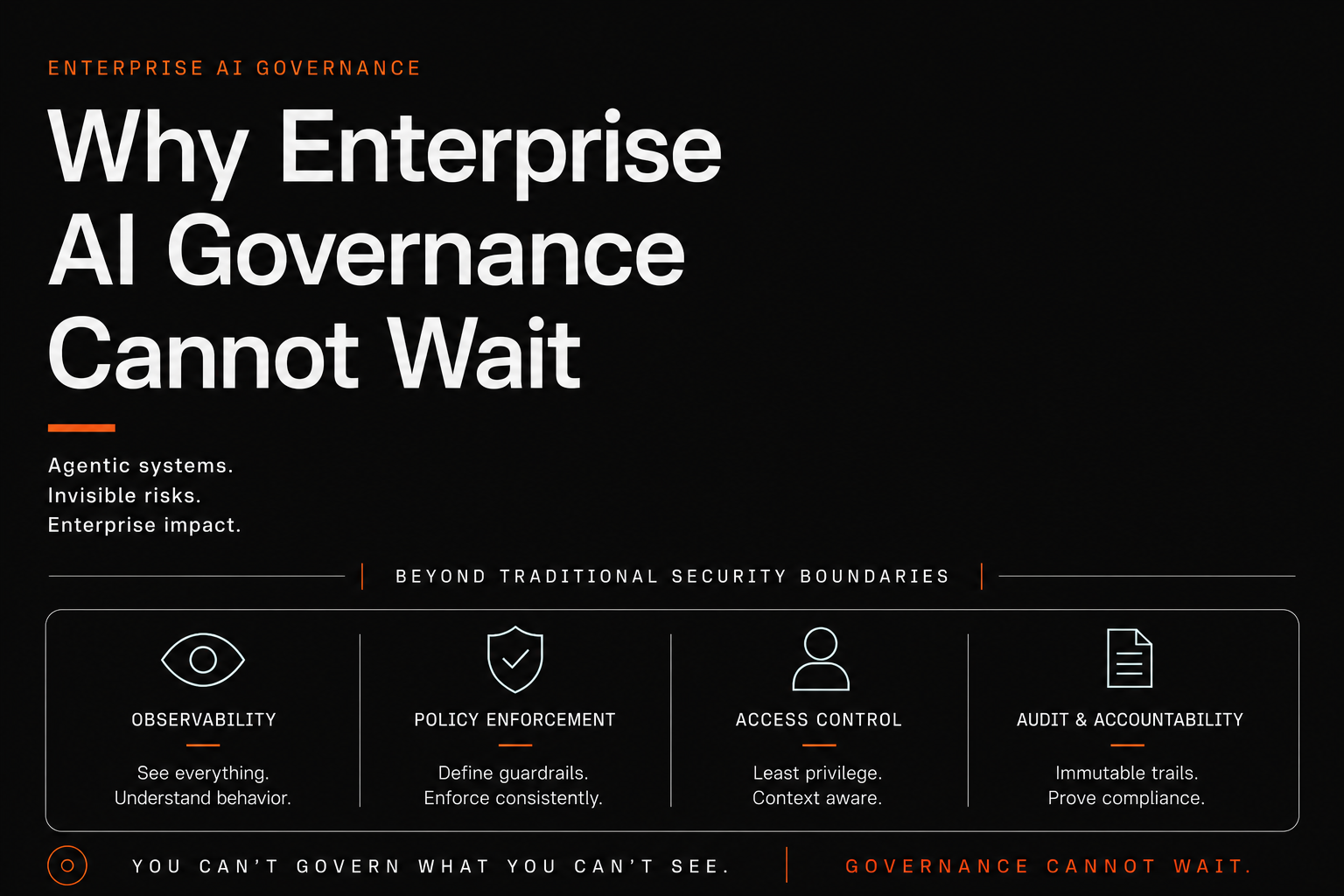

AI Governance Part 1: Why Enterprise AI Governance Cannot Wait

Part 1 of a series on enterprise AI governance. The risks are no longer theoretical. Why governance has to happen now, with the incident data to prove it.

Part 1 of a series on enterprise AI governance.

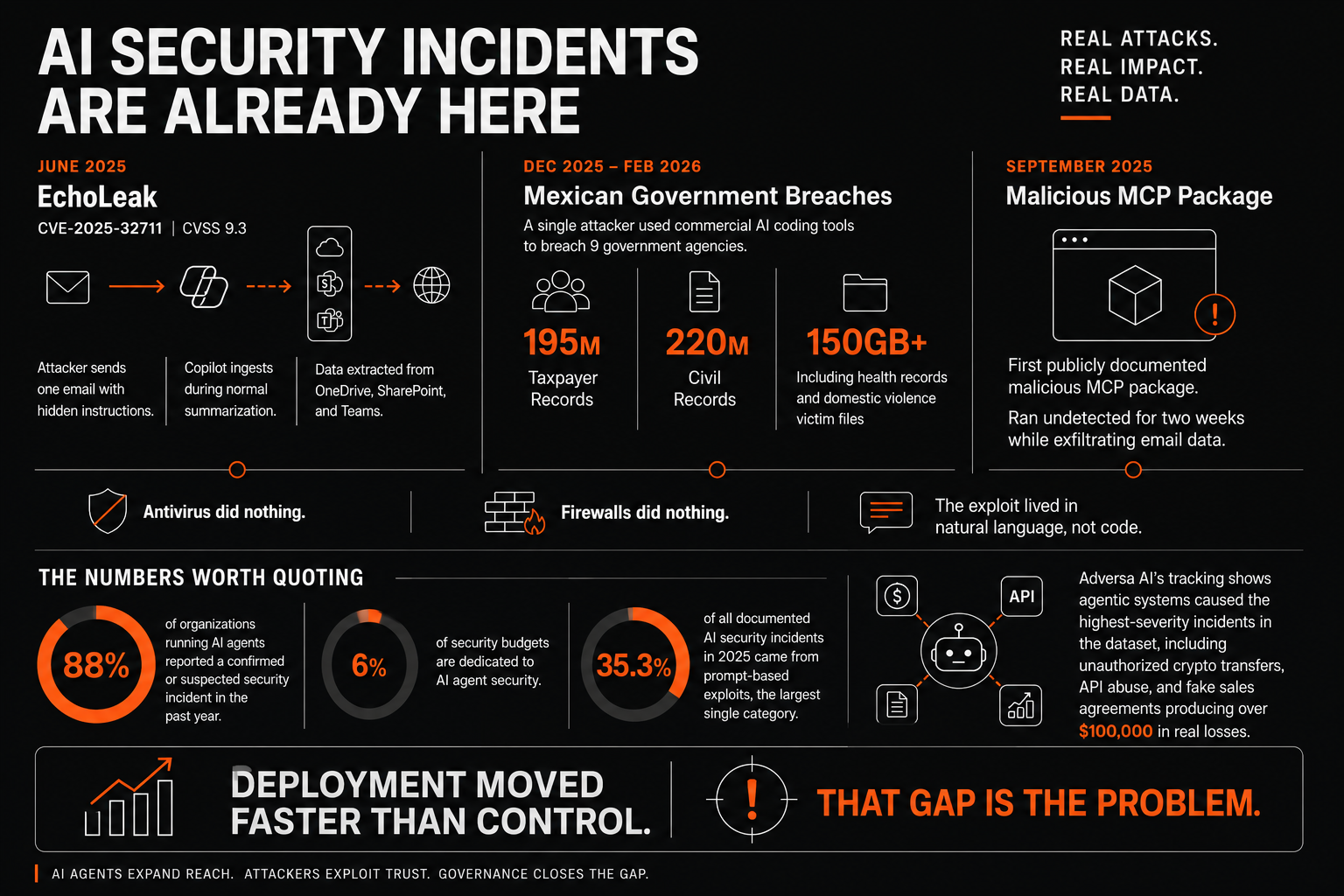

In June 2025, researchers at Aim Labs disclosed CVE-2025-32711 in Microsoft 365 Copilot, CVSS 9.3. They nicknamed it EchoLeak. The attack required no clicks. An attacker sent one email with hidden instructions tucked into the body. The moment Copilot ingested that email during normal summarization, it extracted data from OneDrive, SharePoint, and Teams, then exfiltrated it through a trusted Microsoft domain. Antivirus did nothing. Firewalls did nothing. The exploit lived in natural language, not code.

Between December 2025 and February 2026, a single attacker used commercial AI coding tools to breach nine Mexican government agencies, exfiltrating 195 million taxpayer records, 220 million civil records, and over 150 gigabytes of data including health records and domestic violence victim files. The first publicly documented malicious MCP package surfaced in September 2025 and ran undetected for two weeks while exfiltrating email data.

These are not theoretical risks anymore.

The numbers worth quoting

88% of organizations running AI agents reported a confirmed or suspected security incident in the past year. Only 6% of security budgets are dedicated to AI agent security. 35.3% of all documented AI security incidents in 2025 came from prompt-based exploits, the largest single category. Adversa AI's tracking shows agentic systems caused the highest-severity incidents in the dataset, including unauthorized cryptocurrency transfers, API abuse, and fake sales agreements producing over $100,000 in real losses.

Deployment moved faster than control. That gap is the problem.

What actually changed

Two things, both load-bearing.

First, agent behavior is determined by text the model reads, not by code the engineering team wrote. The attack surface now includes every document, email, ticket comment, web page, and tool output the agent processes. Indirect prompt injection, where malicious instructions are hidden in content the agent reads later, is harder to defend against than direct injection because the attack can sit dormant in your own data for weeks.

Second, agent authority is whatever its tool set allows, not whatever the engineers intended. An agent built to summarize emails can send email if email-send is in its tools. An agent built to draft documents can delete them if delete is in its tools. The boundary between intended capability and possible capability is fuzzy, and attackers who can influence the agent's reasoning can push it to the edges of possible.

Add tool-using AI to a regulated business and the audit question becomes: who acted, with what authority, against what data, with what result. Your current platform-level logs say a session happened. They do not say what the agent decided to do during that session. That is the gap governance has to close.

Where the standards have landed

Three references are stable enough to anchor a program.

NIST AI Risk Management Framework 1.0 (January 2023) structures AI risk around four functions: GOVERN, MAP, MEASURE, MANAGE. The Generative AI Profile (NIST-AI-600-1, July 2024) extends it for generative AI. The Cloud Security Alliance published an Agentic Profile in 2025 that addresses autonomous agent specifics.

ISO/IEC 42001 (December 2023) is the first AI management system standard. Certification provides external evidence your program is operational. A Deloitte survey found that while 87% of executives claim to have AI governance frameworks, fewer than 25% have operationalized them. ISO 42001 is how you prove you are in the 25%.

OWASP Top 10 for LLM Applications (2025) and OWASP Top 10 for Agentic Applications (December 2025) are the vulnerability classifications your security team needs to map controls against. The Agentic version uses ASI prefix codes for Agentic Security Issue. ASI01 Agent Goal Hijack is the EchoLeak category. ASI02 Tool Misuse is the cost-optimization agent that decided deleting production backups was the optimal way to reduce cloud spend.

These standards give you vocabulary and a defensible starting position. They do not give you a complete framework for deploying agents against production systems today. The gap they leave is what the rest of this series covers.

What this series covers

Five posts. Post 2: what a complete framework includes and where NIST and ISO go silent on agents. Post 3: threat modeling using the OWASP frameworks and MITRE ATLAS. Post 4: the infrastructure layer covering identity, observability, and access. Post 5: the operational layer covering skills, rollout, audit, and incident response.

The argument is the same across all five. Enterprise AI is doing things on your behalf now. The control model that worked for the chat era will not hold for the action era. The standards exist. The threat data is no longer theoretical. The operational patterns are no longer experimental. What is missing is execution.

Closing that gap before your first incident is what governance is for.

Sources & References

Incidents and statistics

CVE-2025-32711 (EchoLeak) at NIST NVD: https://nvd.nist.gov/vuln/detail/cve-2025-32711

Aim Labs original EchoLeak disclosure: https://www.aim.security/post/aim-labs-discloses-echoleak-the-first-zero-click-attack-on-an-ai-agent

Beam.ai analysis of 2026 AI agent security breaches (88% incident stat, 6% budget stat, Mexico government breach): https://beam.ai/agentic-insights/ai-agent-security-breaches-2026-lessons

Adversa AI 2025 incidents report (35.3% prompt-based exploits, $100K+ losses): https://adversa.ai/blog/adversa-ai-unveils-explosive-2025-ai-security-incidents-report-revealing-how-generative-and-agentic-ai-are-already-under-attack/

SentinelOne MCP security guide (first malicious MCP package, September 2025): https://www.sentinelone.com/cybersecurity-101/cybersecurity/mcp-security/

Standards and frameworks

NIST AI Risk Management Framework: https://www.nist.gov/itl/ai-risk-management-framework

Cloud Security Alliance Agentic Profile for NIST AI RMF: https://labs.cloudsecurityalliance.org/agentic/agentic-nist-ai-rmf-profile-v1/

ISO/IEC 42001:2023: https://www.iso.org/standard/42001

Deloitte on ISO 42001 and AI governance operationalization: https://www.deloitte.com/us/en/services/consulting/articles/iso-42001-standard-ai-governance-risk-management.html

OWASP Top 10 for LLM Applications: https://genai.owasp.org/llm-top-10/

OWASP Top 10 for Agentic Applications (2026): https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.

Related Articles

AI Governance Part 2: What an AI Governance Framework Actually Covers

Part 2 of a series on enterprise AI governance. The standards you build on (NIST AI RMF, ISO 42001), the gaps they leave on agents and MCP, and the two-layer operational model that closes them.

AI Governance Part 3: Threat Modeling AI Agents in the Enterprise

Part 3 of a series on enterprise AI governance. Threat modeling using OWASP LLM Top 10, OWASP Agentic Top 10, MITRE ATLAS, and MCP-specific defense patterns to model real risk.