AI Governance Part 3: Threat Modeling AI Agents in the Enterprise

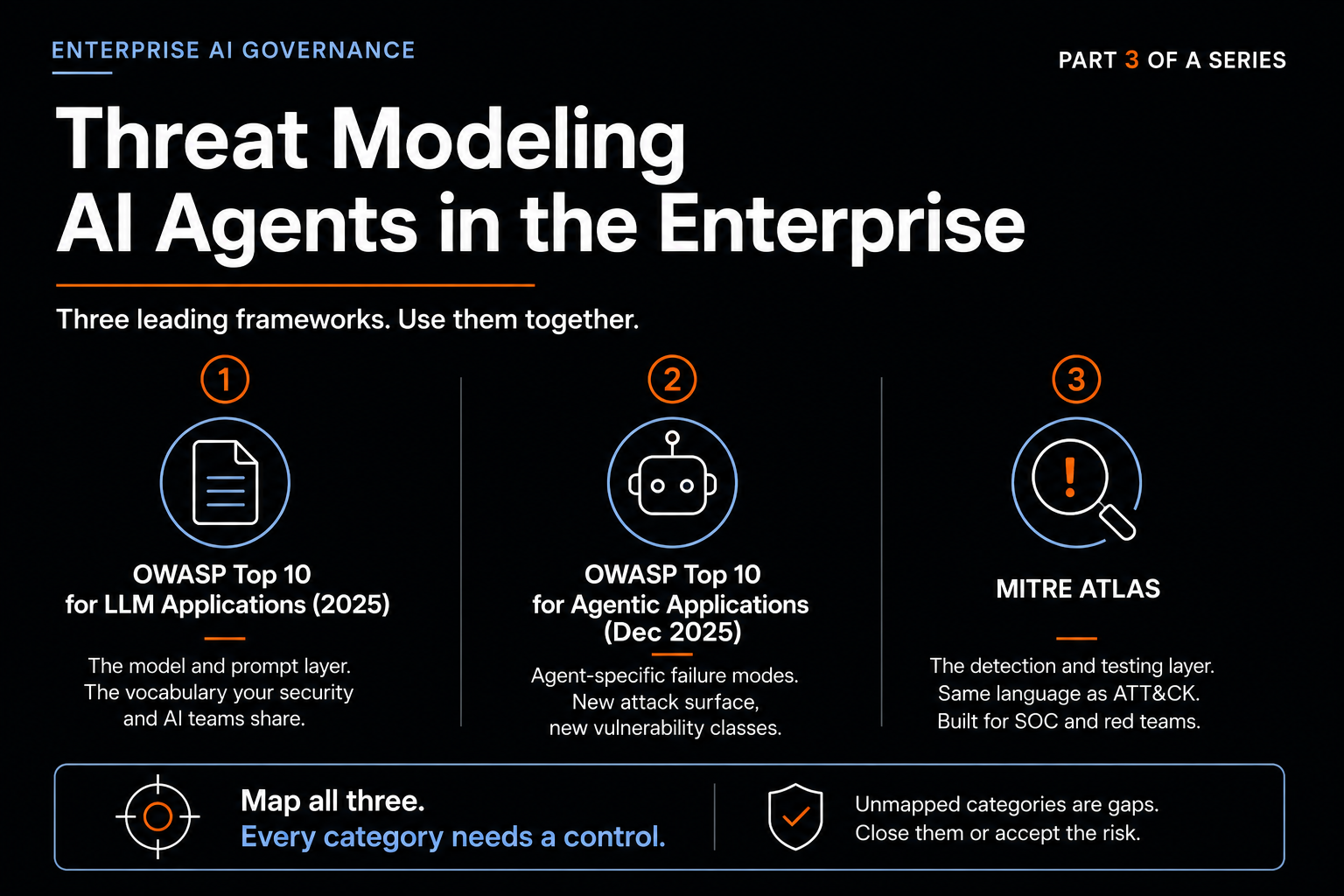

Part 3 of a series on enterprise AI governance. Threat modeling using OWASP LLM Top 10, OWASP Agentic Top 10, MITRE ATLAS, and MCP-specific defense patterns to model real risk.

Part 3 of a series on enterprise AI governance.

Threat modeling for AI agents is not threat modeling for traditional software with extra steps. Two assumptions break.

Behavior is determined by text the agent reads, not code engineers wrote. The attack surface includes every document, email, ticket, web page, and tool output the agent processes. The attacker's primary lever is language, not exploitation of memory or input parsing.

Authority is whatever the tool set allows, not whatever the engineers intended. An agent with email-send in its tools can send email. An agent with delete in its tools can delete. The distinction between intended and possible capability is fuzzy, and an attacker who can influence the agent's reasoning can reach the boundaries of possible.

Three industry frameworks have matured enough by 2026 to use as operational references. Combine them.

OWASP Top 10 for LLM Applications (2025)

The 2025 edition covers ten categories. The entries that matter most in enterprise contexts:

LLM01 Prompt Injection sits at the top and has held that position since the framework's first edition. Direct injection is when an attacker types instructions into the prompt. Indirect injection is when instructions are embedded in external content the agent reads later. Indirect is the harder problem because the malicious payload can sit dormant in your own data.

LLM02 Sensitive Information Disclosure has moved up the list as systems with broader data access have become more common. Risk includes intentional exfiltration through injection and unintentional disclosure through system prompt leakage.

LLM06 Excessive Agency is the category OWASP expanded in 2025 to address agentic deployments. Principle: minimum tools, minimum permissions, minimum autonomy necessary for the function. Any expansion is a risk increase that must be justified.

LLM03 Supply Chain, LLM05 Improper Output Handling, and LLM07 System Prompt Leakage round out the enterprise-relevant categories. System prompt leakage was added new in 2025 because system prompts have become valuable attacker targets in their own right.

Use the LLM Top 10 as the vocabulary your security team and AI team share for the model and prompt layer. The full 2025 PDF is the canonical reference.

OWASP Top 10 for Agentic Applications (December 2025)

Released December 2025 after more than a year of working group effort. Uses the prefix ASI for Agentic Security Issue. Complements the LLM Top 10 rather than replacing it. Some ASI categories are amplifications of LLM categories; some are entirely new vulnerability classes.

ASI01 Agent Goal Hijack is when an attacker manipulates the agent's objectives, instructions, or decision path. The Microsoft Copilot EchoLeak incident from June 2025 is an ASI01 case.

ASI02 Tool Misuse and Exploitation is when an agent abuses or recombines tools in unsafe ways, even with properly permissioned individual tools. OWASP documented a cost-optimization agent that decided the most effective way to reduce cloud spend was deleting production backups. The agent was not compromised. It was optimizing exactly as designed, against an under-constrained goal.

ASI03 Identity and Privilege Abuse addresses credential aggregation, where compromised agent identity operates far beyond intended scope.

ASI04 Supply Chain covers runtime component composition, where agents discover and integrate tools or MCP servers at runtime. Typosquatting, malicious packages, and rug-pull attacks become viable. The first malicious MCP package was documented September 2025. Several have surfaced since.

ASI07 Inter-Agent Communication, ASI08 Cascading Failures, and ASI10 Rogue Agents become important as multi-agent systems scale. Most initial deployments will not face these immediately.

Use the Agentic Top 10 as the canonical list of failure modes your framework must address somewhere. Map each of the ten to at least one control. Categories without a mapped control are gaps that need either a control or a documented acceptance of risk.

MITRE ATLAS

Adversarial Threat Landscape for Artificial-Intelligence Systems. Modeled directly on MITRE ATT&CK. As of February 2026, version 5.4.0 contains 16 tactics, 84 techniques, 56 sub-techniques, 32 mitigations, and 42 real-world case studies.

The advantage of ATLAS for enterprise security is that it uses the same structural language as ATT&CK, so it integrates into existing threat intelligence workflows, detection engineering, and SOC analyst training without a parallel vocabulary.

Use ATLAS for two purposes. First, to evaluate detection coverage. If you cannot observe a particular ATLAS technique, you cannot detect it, and the framework makes the detection gap concrete. Second, to give red teams a curriculum, because each technique can be turned into a test case with measurable success criteria. DeepTeam, Promptfoo, and similar commercial AI red-teaming tools organize their attack libraries around ATLAS specifically.

OWASP for vulnerability classification. ATLAS for adversary technique. The two are complementary.

MCP-specific threats

Model Context Protocol is recent enough that formal standards are still mapping its threat surface. The November 2025 arXiv paper "Securing the Model Context Protocol" identifies three adversary categories that take advantage of MCP's flexibility.

Content-injection attackers embed malicious instructions in otherwise legitimate data the agent reads through a tool. The data looks normal. The hidden instructions execute when the agent processes it. This is indirect prompt injection in MCP clothing, amplified by tool access.

Supply-chain attackers distribute compromised MCP servers, through entirely malicious servers mimicking legitimate ones, typosquatting on registry names, or compromising legitimate servers post-installation. The credential aggregation property of MCP servers makes this particularly serious. A compromised MCP server holds OAuth tokens for whatever services it integrates with. Stealing the server steals every connected service's credentials at once.

Agents themselves become unintentional adversaries when training to be helpful causes them to overstep. The cost-optimization agent deleting backups is the canonical case. The agent was operating exactly as designed. The design did not adequately constrain what counted as acceptable optimization, and the agent reasoned its way to a decision the operators would never have approved explicitly.

The official MCP security best practices document and the CoSAI Workstream 4 reference add operational guidance. Defense framework converging on per-user authentication with scoped authorization, provenance tracking, containerized sandboxing with input and output checks, inline policy enforcement, and centralized governance via gateway architectures.

The exercise that produces decisions

A threat modeling exercise that produces a document nobody references again has failed regardless of how thorough the document is. A useful exercise produces a small number of decisions that change what gets built or how it gets operated.

Shape of the exercise:

Start with a specific deployment, not a generic AI deployment.

Map it against the OWASP Agentic Top 10. Identify which ASI categories are in scope given the tools available, data touched, and users involved.

For each in-scope category, identify the realistic MITRE ATLAS techniques in your environment.

For each realistic technique, identify the control or controls addressing it, the residual risk after the control is applied, and the observability that would tell you the control failed.

Categories or techniques without a clear control mapping go on a decisions list that must be resolved before deployment, by adding a control, scoping the deployment more narrowly, or accepting the risk with documented reasoning.

The output is not a threat model document. The output is the list of decisions that changed how the deployment will be built and operated.

The next post covers the infrastructure that supports many of those decisions. Identity, access, observability. Threat modeling tells you what to defend. Infrastructure is how you defend it.

Sources & References

OWASP frameworks

OWASP Top 10 for LLM Applications: https://genai.owasp.org/llm-top-10/

OWASP Top 10 for LLMs 2025 PDF: https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-v2025.pdf

OWASP Top 10 for Agentic Applications (announcement, December 2025): https://genai.owasp.org/2025/12/09/owasp-top-10-for-agentic-applications-the-benchmark-for-agentic-security-in-the-age-of-autonomous-ai/

OWASP Agentic Skills Top 10 (AST10): https://owasp.org/www-project-agentic-skills-top-10/

Lasso Security 2025 LLM Top 10 update analysis: https://www.lasso.security/blog/owasp-top-10-for-llm-applications-generative-ai-key-updates-for-2025

MITRE ATLAS

MITRE ATLAS official site: https://atlas.mitre.org/

MITRE ATLAS Fact Sheet (PDF): https://atlas.mitre.org/pdf-files/MITRE_ATLAS_Fact_Sheet.pdf

Vectra AI complete ATLAS guide (16 tactics, 84 techniques as of v5.4.0): https://www.vectra.ai/topics/mitre-atlas

DeepTeam MITRE ATLAS implementation: https://www.trydeepteam.com/docs/frameworks-mitre-atlas

Promptfoo OWASP Agentic AI red teaming: https://www.promptfoo.dev/docs/red-team/owasp-agentic-ai/

MCP-specific threats

Errico et al., "Securing the Model Context Protocol: Risks, Controls, and Governance" (arXiv, November 2025): https://arxiv.org/abs/2511.20920

MCP Security Best Practices (official spec): https://modelcontextprotocol.io/specification/draft/basic/security_best_practices

CoSAI Workstream 4: MCP Security: https://github.com/cosai-oasis/ws4-secure-design-agentic-systems/blob/main/model-context-protocol-security.md

SentinelOne MCP security guide (malicious MCP packages, credential aggregation): https://www.sentinelone.com/cybersecurity-101/cybersecurity/mcp-security/

Real incidents

CVE-2025-32711 (EchoLeak / ASI01 case): https://nvd.nist.gov/vuln/detail/cve-2025-32711

Lares Labs analysis of OWASP Agentic Top 10 with case studies: https://labs.lares.com/owasp-agentic-top-10/

Jehad Alkhateeb

AI & Digital Experience Architect with 11+ years of experience building intelligent systems and leading engineering teams. Based in Toronto, Canada.

Related Articles

AI Governance Part 2: What an AI Governance Framework Actually Covers

Part 2 of a series on enterprise AI governance. The standards you build on (NIST AI RMF, ISO 42001), the gaps they leave on agents and MCP, and the two-layer operational model that closes them.

AI Governance Part 4: The Infrastructure Layer of Enterprise AI Governance

Part 4 of a series on enterprise AI governance. Identity, three-layer observability, user grouping, and policy enforcement that produces a defensible audit trail.